Lab 2: HDFS Command Practice

Introduction

- This lab continues from Lab 1.

Content 1. Basic Operations

1.1 Browse your HDFS directory

/opt/hadoop$ bin/hadoop fs -ls

1.2 Upload data to an HDFS directory

- Upload

/opt/hadoop$ bin/hadoop fs -put conf input

- Check

/opt/hadoop$ bin/hadoop fs -ls

/opt/hadoop$ bin/hadoop fs -ls input
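The upload and check steps can be wrapped in one small helper. This is only a minimal sketch: the `HADOOP` variable and the function name `upload_and_check` are hypothetical names introduced for illustration, and only `fs -put` and `fs -ls` come from this lab.

```shell
#!/bin/sh
# HADOOP is an assumed variable: the launcher used in this lab, relative to /opt/hadoop.
HADOOP="${HADOOP:-bin/hadoop}"

# upload_and_check (hypothetical helper): upload a local path, then list the target.
upload_and_check() {
    src="$1"
    dst="$2"
    # Fail early if the local source does not exist.
    if [ ! -e "$src" ]; then
        echo "local source not found: $src" >&2
        return 1
    fi
    # Only list the destination when the upload itself succeeded.
    "$HADOOP" fs -put "$src" "$dst" && "$HADOOP" fs -ls "$dst"
}
```

Run from /opt/hadoop, `upload_and_check conf input` would perform the same two steps as above.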

1.3 Download HDFS data to a local directory

- Download

/opt/hadoop$ bin/hadoop fs -get input fromHDFS

- Check

/opt/hadoop$ ls -al | grep fromHDFS

/opt/hadoop$ ls -al fromHDFS
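Beyond listing the directory, one hedged way to confirm the round trip is to compare the original local directory with the downloaded copy. The sketch below uses the standard `diff -r`; the function name `same_tree` is hypothetical. It assumes nothing in `input` changed between the upload and the download, and any extra file on either side is reported as a difference.

```shell
#!/bin/sh
# same_tree (hypothetical helper): succeed only if two local directories
# contain the same files with the same contents.
same_tree() {
    # diff -r walks both trees, prints any differences,
    # and returns 0 only when there are none.
    diff -r "$1" "$2"
}
```

For example, `same_tree conf fromHDFS && echo "round trip OK"` prints the message only when the two directories match.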

1.4 Delete a file

/opt/hadoop$ bin/hadoop fs -ls input

/opt/hadoop$ bin/hadoop fs -rm input/masters
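To confirm that a deletion took effect, the listing can be checked again afterwards. The following is a sketch under stated assumptions: `HADOOP` and `delete_and_confirm` are hypothetical names, and the check assumes that each line printed by `fs -ls` ends with the full path of the entry, which may not hold on every Hadoop release.

```shell
#!/bin/sh
# HADOOP is an assumed variable: the launcher used in this lab.
HADOOP="${HADOOP:-bin/hadoop}"

# delete_and_confirm (hypothetical helper): remove DIR/NAME from HDFS,
# then verify that NAME no longer appears in the listing of DIR.
delete_and_confirm() {
    dir="$1"
    name="$2"
    "$HADOOP" fs -rm "$dir/$name" || return 1
    # Assumption: every -ls line ends with the entry's path.
    if "$HADOOP" fs -ls "$dir" | grep -q -- "/$name\$"; then
        echo "still listed: $dir/$name" >&2
        return 1
    fi
    echo "deleted: $dir/$name"
}
```

With this helper, `delete_and_confirm input masters` covers both commands in this step.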

1.5 View a file directly

/opt/hadoop$ bin/hadoop fs -ls input

/opt/hadoop$ bin/hadoop fs -cat input/slaves

1.6 More commands

hadooper@vPro:/opt/hadoop$ bin/hadoop fs

Usage: java FsShell

[-ls <path>]

[-lsr <path>]

[-du <path>]

[-dus <path>]

[-count[-q] <path>]

[-mv <src> <dst>]

[-cp <src> <dst>]

[-rm <path>]

[-rmr <path>]

[-expunge]

[-put <localsrc> ... <dst>]

[-copyFromLocal <localsrc> ... <dst>]

[-moveFromLocal <localsrc> ... <dst>]

[-get [-ignoreCrc] [-crc] <src> <localdst>]

[-getmerge <src> <localdst> [addnl]]

[-cat <src>]

[-text <src>]

[-copyToLocal [-ignoreCrc] [-crc] <src> <localdst>]

[-moveToLocal [-crc] <src> <localdst>]

[-mkdir <path>]

[-setrep [-R] [-w] <rep> <path/file>]

[-touchz <path>]

[-test -[ezd] <path>]

[-stat [format] <path>]

[-tail [-f] <file>]

[-chmod [-R] <MODE[,MODE]... | OCTALMODE> PATH...]

[-chown [-R] [OWNER][:[GROUP]] PATH...]

[-chgrp [-R] GROUP PATH...]

[-help [cmd]]

Generic options supported are

-conf <configuration file> specify an application configuration file

-D <property=value> use value for given property

-fs <local|namenode:port> specify a namenode

-jt <local|jobtracker:port> specify a job tracker

-files <comma separated list of files> specify comma separated files to be copied to the map reduce cluster

-libjars <comma separated list of jars> specify comma separated jar files to include in the classpath.

-archives <comma separated list of archives> specify comma separated archives to be unarchived on the compute machines.

The general command line syntax is

bin/hadoop command [genericOptions] [commandOptions]

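Content 2. Hadoop Compute Commands

2.1 Hadoop compute command: grep

- The grep command extracts specific text from files. In the Hadoop examples, it extracts every string in the input files that matches the given pattern and counts the occurrences of each.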

/opt/hadoop$ bin/hadoop jar hadoop-*-examples.jar grep input grep_output 'dfs[a-z.]+'

The run produces output like the following:

09/03/24 12:33:45 INFO mapred.FileInputFormat: Total input paths to process : 9

09/03/24 12:33:45 INFO mapred.FileInputFormat: Total input paths to process : 9

09/03/24 12:33:45 INFO mapred.JobClient: Running job: job_200903232025_0003

09/03/24 12:33:46 INFO mapred.JobClient: map 0% reduce 0%

09/03/24 12:33:47 INFO mapred.JobClient: map 10% reduce 0%

09/03/24 12:33:49 INFO mapred.JobClient: map 20% reduce 0%

09/03/24 12:33:51 INFO mapred.JobClient: map 30% reduce 0%

09/03/24 12:33:52 INFO mapred.JobClient: map 40% reduce 0%

09/03/24 12:33:54 INFO mapred.JobClient: map 50% reduce 0%

09/03/24 12:33:55 INFO mapred.JobClient: map 60% reduce 0%

09/03/24 12:33:57 INFO mapred.JobClient: map 70% reduce 0%

09/03/24 12:33:59 INFO mapred.JobClient: map 80% reduce 0%

09/03/24 12:34:00 INFO mapred.JobClient: map 90% reduce 0%

09/03/24 12:34:02 INFO mapred.JobClient: map 100% reduce 0%

09/03/24 12:34:10 INFO mapred.JobClient: map 100% reduce 10%

09/03/24 12:34:12 INFO mapred.JobClient: map 100% reduce 13%

09/03/24 12:34:15 INFO mapred.JobClient: map 100% reduce 20%

09/03/24 12:34:20 INFO mapred.JobClient: map 100% reduce 23%

09/03/24 12:34:22 INFO mapred.JobClient: Job complete: job_200903232025_0003

09/03/24 12:34:22 INFO mapred.JobClient: Counters: 16

09/03/24 12:34:22 INFO mapred.JobClient: File Systems

09/03/24 12:34:22 INFO mapred.JobClient: HDFS bytes read=48245

09/03/24 12:34:22 INFO mapred.JobClient: HDFS bytes written=1907

09/03/24 12:34:22 INFO mapred.JobClient: Local bytes read=1549

09/03/24 12:34:22 INFO mapred.JobClient: Local bytes written=3584

09/03/24 12:34:22 INFO mapred.JobClient: Job Counters

......

- Next, check the result

/opt/hadoop$ bin/hadoop fs -ls grep_output

/opt/hadoop$ bin/hadoop fs -cat grep_output/part-00000

The result is as follows:

3 dfs.class

3 dfs.

2 dfs.period

1 dfs.http.address

1 dfs.balance.bandwidth

1 dfs.block.size

1 dfs.blockreport.initial

1 dfs.blockreport.interval

1 dfs.client.block.write.retries

1 dfs.client.buffer.dir

1 dfs.data.dir

1 dfs.datanode.address

1 dfs.datanode.dns.interface

1 dfs.datanode.dns.nameserver

1 dfs.datanode.du.pct

1 dfs.datanode.du.reserved

1 dfs.datanode.handler.count

1 dfs.datanode.http.address

1 dfs.datanode.https.address

1 dfs.datanode.ipc.address

1 dfs.default.chunk.view.size

1 dfs.df.interval

1 dfs.file

1 dfs.heartbeat.interval

1 dfs.hosts

1 dfs.hosts.exclude

1 dfs.https.address

1 dfs.impl

1 dfs.max.objects

1 dfs.name.dir

1 dfs.namenode.decommission.interval

1 dfs.namenode.decommission.interval.

1 dfs.namenode.decommission.nodes.per.interval

1 dfs.namenode.handler.count

1 dfs.namenode.logging.level

1 dfs.permissions

1 dfs.permissions.supergroup

1 dfs.replication

1 dfs.replication.consider

1 dfs.replication.interval

1 dfs.replication.max

1 dfs.replication.min

1 dfs.replication.min.

1 dfs.safemode.extension

1 dfs.safemode.threshold.pct

1 dfs.secondary.http.address

1 dfs.servers

1 dfs.web.ugi

1 dfsmetrics.log

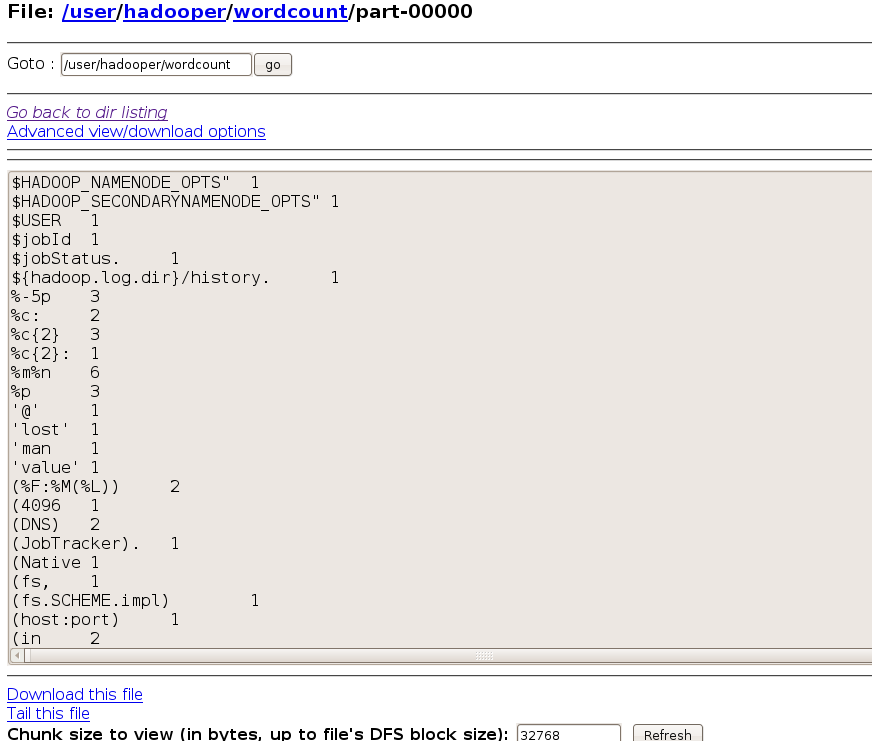
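To see what the grep job computes, the same kind of count can be approximated on a single machine with standard Unix tools. This is a local analogy, not how Hadoop implements the job: the function name `local_grep_count` is hypothetical, it assumes a `grep` that supports `-o` and `-h` (GNU and BSD grep do), and the order of entries with equal counts may differ from the Hadoop output.

```shell
#!/bin/sh
# local_grep_count (hypothetical helper): print "count<TAB>match" for every
# distinct string matching PATTERN in the given files, highest count first.
local_grep_count() {
    pattern="$1"
    shift
    # -o prints each match on its own line, -h hides file names, -E enables '+'.
    grep -ohE "$pattern" "$@" |
        sort |
        uniq -c |
        awk '{print $1 "\t" $2}' |
        sort -k1,1nr -k2,2
}
```

For instance, after downloading the data as in 1.3, `local_grep_count 'dfs[a-z.]+' fromHDFS/*` could be compared with the contents of grep_output/part-00000.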

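2.2 Hadoop compute command: WordCount

- As the name suggests, WordCount counts the occurrences of every word and lists the results sorted from a to z.

/opt/hadoop$ bin/hadoop jar hadoop-*-examples.jar wordcount input wc_output

Check the output the same way as in 2.1.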
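As with grep, a single-machine approximation can make the WordCount result easier to interpret. The sketch below is an analogy built from standard Unix tools, not the Hadoop implementation: `local_wordcount` is a hypothetical name, words are assumed to be separated by whitespace only, and `LC_ALL=C` is used so that sorting is by byte value.

```shell
#!/bin/sh
# local_wordcount (hypothetical helper): print "word<TAB>count" for every
# whitespace-separated word in the given files, sorted by word.
local_wordcount() {
    cat "$@" |
        tr -s '[:space:]' '\n' |    # one word per line
        grep -v '^$' |              # drop the empty line left by leading whitespace
        LC_ALL=C sort |
        uniq -c |
        awk '{print $2 "\t" $1}'
}
```

Running `local_wordcount fromHDFS/*` on the downloaded copy gives a list that can be compared with the files under wc_output.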

2.3 More compute commands

List of runnable example programs:

- aggregatewordcount: An Aggregate based map/reduce program that counts the words in the input files.
- aggregatewordhist: An Aggregate based map/reduce program that computes the histogram of the words in the input files.
- grep: A map/reduce program that counts the matches of a regex in the input.
- join: A job that effects a join over sorted, equally partitioned datasets
- multifilewc: A job that counts words from several files.
- pentomino: A map/reduce tile laying program to find solutions to pentomino problems.
- pi: A map/reduce program that estimates Pi using monte-carlo method.
- randomtextwriter: A map/reduce program that writes 10GB of random textual data per node.
- randomwriter: A map/reduce program that writes 10GB of random data per node.
- sleep: A job that sleeps at each map and reduce task.
- sort: A map/reduce program that sorts the data written by the random writer.
- sudoku: A sudoku solver.
- wordcount: A map/reduce program that counts the words in the input files.
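When repeating the grep or wordcount examples, a job normally fails if its output directory already exists in HDFS. The sketch below clears the output directory first and then launches the example. The names `HADOOP`, `EXAMPLES_JAR`, and `run_example` are hypothetical, the helper only fits examples that take an input and an output directory (such as grep and wordcount), and it deletes the old output without asking, so it is meant for lab data only.

```shell
#!/bin/sh
# Assumed variables: the launcher and the examples jar used in this lab.
HADOOP="${HADOOP:-bin/hadoop}"
EXAMPLES_JAR="${EXAMPLES_JAR:-hadoop-*-examples.jar}"

# run_example (hypothetical helper): run NAME on INPUT, writing to OUTPUT,
# passing any remaining arguments (for example a grep pattern) to the job.
run_example() {
    name="$1"
    input_dir="$2"
    output_dir="$3"
    shift 3
    # Remove a previous output directory; errors are ignored when it does not exist.
    "$HADOOP" fs -rmr "$output_dir" >/dev/null 2>&1 || true
    # EXAMPLES_JAR is left unquoted on purpose so the shell expands the wildcard.
    "$HADOOP" jar $EXAMPLES_JAR "$name" "$input_dir" "$output_dir" "$@"
}
```

For example, `run_example grep input grep_output 'dfs[a-z.]+'` repeats the job from 2.1, and `run_example wordcount input wc_output` repeats the one from 2.2.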

Content 3. Browsing Information with the Web GUI

Content 4. Exercises

Last modified on Mar 29, 2009, 1:50:12 AM
